A company gets hit with ransomware. The CISO walks into the boardroom.

The CFO asks one question: "How much does this actually cost us?" The CISO says, "high risk." The CFO stares. Nothing happens, and no budget gets approved.

This is not a hypothetical situation. It plays out in boardrooms regularly.

The problem is not that the risk was unknown. The problem is that no one has done the work of risk quantification. Without it, risk stays vague.

Vague risk neither gets resources nor drives informed decision-making.

If your risk story needs to hold up in a boardroom, talk to an expert right away!

This article covers the full picture. You will learn what risk quantification is, why it matters to your business, which methods and models work, how the FAIR framework operates, how to run the process, and how to choose tools that actually help.

What Is Risk Quantification?

Risk quantification is the process of turning risk into a number. A financial number. Instead of saying a risk is "high" or "medium," you express it as a potential dollar loss. That shift changes how your business responds to risk entirely.

This is not a new idea. Actuaries and financial analysts have quantified risk for decades. What is new is applying this discipline to cyber risk and business operations in a structured, repeatable way. That is where the field of risk quantification has grown most.

The definition of risk quantification is simple.

You take a threat, estimate how often it might happen, then estimate the financial damage if it does. The result is a loss estimate that your business can actually use:

Risk = Likelihood of Event x Financial Impact

So if a ransomware attack has a 10% chance of hitting your business and would cost $2M if it did, your risk exposure from that threat is $200K. That is what goes into budget conversations, not "high."
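As a rough sketch, the same arithmetic can be written in a few lines of Python. The function name `expected_loss` and its parameters are illustrative choices, not part of any standard.

```python
def expected_loss(probability: float, impact_usd: float) -> float:
    """Risk exposure = likelihood of the event x financial impact."""
    if not 0.0 <= probability <= 1.0:
        raise ValueError("probability must be between 0 and 1")
    return probability * impact_usd


# The ransomware example from the text: 10% chance, $2M impact.
ransomware_exposure = expected_loss(probability=0.10, impact_usd=2_000_000)
print(f"${ransomware_exposure:,.0f}")  # $200,000
```

A single figure like this is a starting point. Later sections replace the single probability and impact with ranges.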

That loss estimate feeds into budget decisions, shapes cyber insurance choices, and helps leadership prioritise. Without quantification of risk, those decisions rest on opinion.

Cyber risk quantification, often called CRQ, is the application of this to digital threats. Think of it as a dollar-value report on your cyber exposure. CRQ turns "we could get breached" into a measurable range of probable loss.

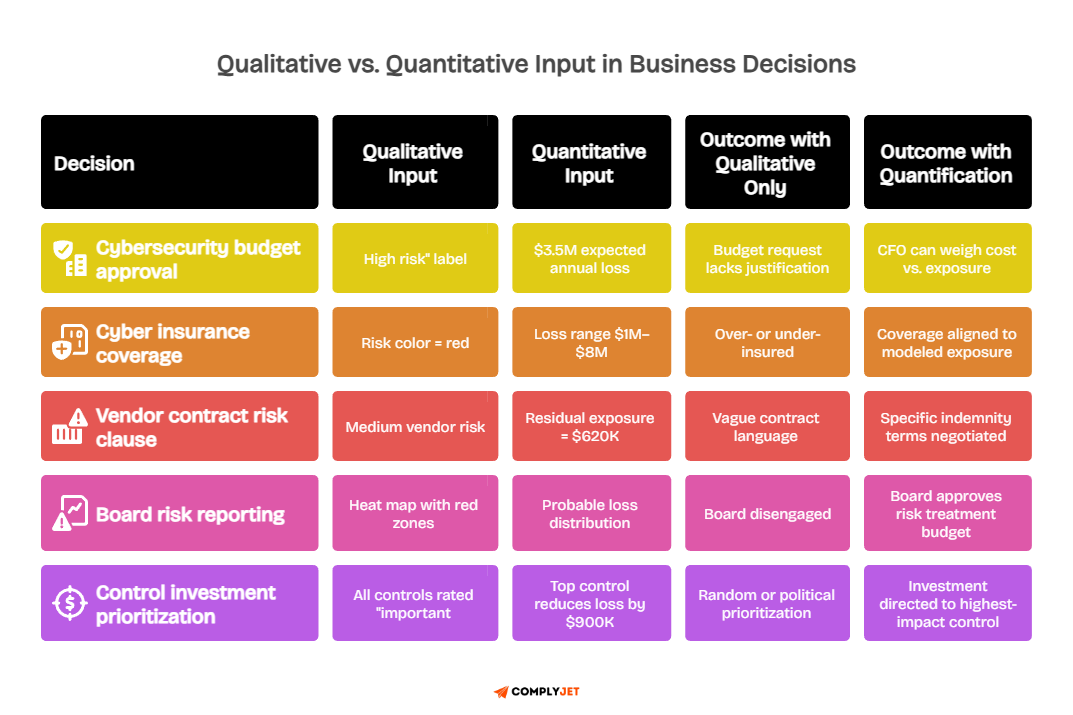

Why Risk Exposure Cannot Stay Qualitative

Most organisations still use colour-coded matrices. Red means bad. Green means fine.

The problem is that "red" for a $50M company and "red" for a $5B company mean very different things. Qualitative labels do not scale with the size or nature of the business.

When you assign dollar values to risk, you can compare risks directly. You can weigh a $500K cyber risk against a $200K operational risk. That is how you make real budget decisions.

That is what informed decision-making looks like in practice.

Risk exposure has real financial weight. A data breach, a compliance failure, a vendor outage: each has a cost. According to IBM's Cost of a Data Breach Report, the global average cost of a data breach runs into the millions of dollars and has trended upward over the past decade. Risk quantification makes that cost visible before the event, not after.

Why Businesses Can No Longer Afford to "Estimate" Risk

Most businesses still manage risk by estimation. Someone looks at a threat, labels it "likely" or "unlikely," and moves on.

This approach made sense when cyber threats were rare and boards did not ask hard financial questions. Neither of those things is true anymore.

Risk quantification fills the gap between gut instinct and financial reality. When you know the potential financial impact of a risk, you stop guessing about what to fix first. You start making decisions the same way a CFO makes investment decisions: with numbers.

The Real Cost of Staying Qualitative

A qualitative risk assessment tells you a risk is "high." It does not tell you whether that high risk costs $100K or $100M.

Those two numbers call for very different responses. One might justify a small control investment. The other justifies an enterprise-wide program.

The business impact of misreading this gap is measurable. Resources go to the wrong risks. Boards approve budgets they cannot justify.

Cyber threats that could have been contained become full incidents. The financial impact shows up in breach costs, regulatory fines, and customer losses.

The Vendor Risk Problem

Third-party risk, or vendor risk, is where qualitative programs fall apart fastest. When you rely on a vendor, their failure can become your loss. How organisations quantify residual vendor risk has become one of the most important questions in enterprise risk programs today.

Residual risk is what is left after your controls are applied. You cannot reduce it to zero. But you can measure it using a straightforward idea.

You can assign a financial value to the exposure that remains after factoring in your vendor's controls:

Residual Risk = Inherent Risk x (1 - Control Effectiveness)

For example, if a vendor relationship carries $1M in inherent risk and your controls are 70% effective, your residual exposure is $300K. That number tells you whether to accept the risk, transfer it through insurance, or require the vendor to improve.
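A minimal sketch of that calculation follows. The `suggested_treatment` helper and its two thresholds are hypothetical; in practice they would come from your own risk appetite, not from this example.

```python
def residual_risk(inherent_usd: float, control_effectiveness: float) -> float:
    """Residual risk = inherent risk x (1 - control effectiveness)."""
    if not 0.0 <= control_effectiveness <= 1.0:
        raise ValueError("control_effectiveness must be between 0 and 1")
    return inherent_usd * (1.0 - control_effectiveness)


def suggested_treatment(residual_usd: float, accept_below: float, insure_below: float) -> str:
    """Map residual exposure to a treatment using hypothetical thresholds."""
    if residual_usd < accept_below:
        return "accept"
    if residual_usd < insure_below:
        return "transfer"
    return "require vendor improvement"


# The vendor example from the text: $1M inherent risk, controls 70% effective.
vendor_residual = residual_risk(inherent_usd=1_000_000, control_effectiveness=0.70)
print(f"${vendor_residual:,.0f}")  # $300,000
print(suggested_treatment(vendor_residual, accept_below=100_000, insure_below=500_000))  # transfer
```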

Informed decisions about vendor relationships require knowing what a vendor failure actually costs you. Not "high." A number. Potential risks tied to third parties need the same financial rigour as internal cyber risks.

Use our third-party risk management guide to start measuring your third-party exposure.

Quantitative vs. Qualitative Risk Analysis: What Is the Difference?

These two terms get used interchangeably. They should not be.

Qualitative risk analysis and quantitative risk analysis produce different outputs and serve different purposes. Knowing when to use each is a core skill in any business risk program.

Risk analysis, at its broadest, is the process of identifying, estimating, and evaluating the potential effects of a risk. Both qualitative and quantitative approaches do this, just at very different levels of precision.

What Qualitative Risk Analysis Does

Qualitative risk analysis uses subjective scoring. Risks get rated as high, medium, or low based on expert judgment. It is fast. It does not require much data.

It works well as a starting point when you are mapping a new risk landscape.

The weakness is consistency. Two analysts looking at the same threat can reach different ratings.

A board member in finance and a CISO will often disagree about what "high" means for a given business impact. Qualitative labels do not translate cleanly into financial decisions.

At some point, leadership needs to know the dollar exposure. Not the colour of a matrix cell.

What Quantitative Risk Analysis Does

Quantitative risk analysis uses numbers. It estimates how likely a threat is and what it would cost if it happened. The output is a range of potential losses expressed in dollar terms. Harder to produce, but far easier to act on.

A practical way to think about it: if a threat occurs roughly once every two years and costs $500K when it does, your average expected loss per year is $250K.

That is the number that belongs in a budget conversation.

Quantifying risk in a risk assessment comes down to three inputs: how often a threat event occurs, the probability that your controls fail, and the magnitude of the resulting loss. Once you have those, you can calculate a meaningful risk range.
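Here is one way those three inputs might combine into an annual figure. The function name and the second set of numbers (four attempts a year, a 12.5% chance that controls fail) are assumptions made for illustration.

```python
def annual_expected_loss(
    threat_events_per_year: float,
    control_failure_probability: float,
    loss_per_event_usd: float,
) -> float:
    """Expected yearly loss from frequency, control failure rate, and magnitude."""
    loss_events_per_year = threat_events_per_year * control_failure_probability
    return loss_events_per_year * loss_per_event_usd


# The example from the text: a loss roughly once every two years, $500K each time.
print(f"${annual_expected_loss(0.5, 1.0, 500_000):,.0f}")    # $250,000

# Hypothetical breakdown of the same exposure: 4 attempts a year,
# controls fail 12.5% of the time, $500K per successful attack.
print(f"${annual_expected_loss(4, 0.125, 500_000):,.0f}")    # $250,000
```

Splitting frequency from control failure shows where a control investment would act: it lowers the second input, not the first.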

The NIST Risk Management Framework provides detailed guidance on structuring this kind of quantitative analysis.

Using Both Together

The strongest risk programs use both methods. Qualitative analysis helps you screen and prioritise quickly. Quantitative risk analysis then helps you make precise decisions about the risks that matter most.

Think of qualitative analysis as your radar and quantitative analysis as your targeting system. The radar finds the threats.

The targeting system tells you which ones to address first and what it costs to fix them. See how leading security teams combine both approaches in practice.

Core Risk Quantification Methods and Techniques Every Business Should Know

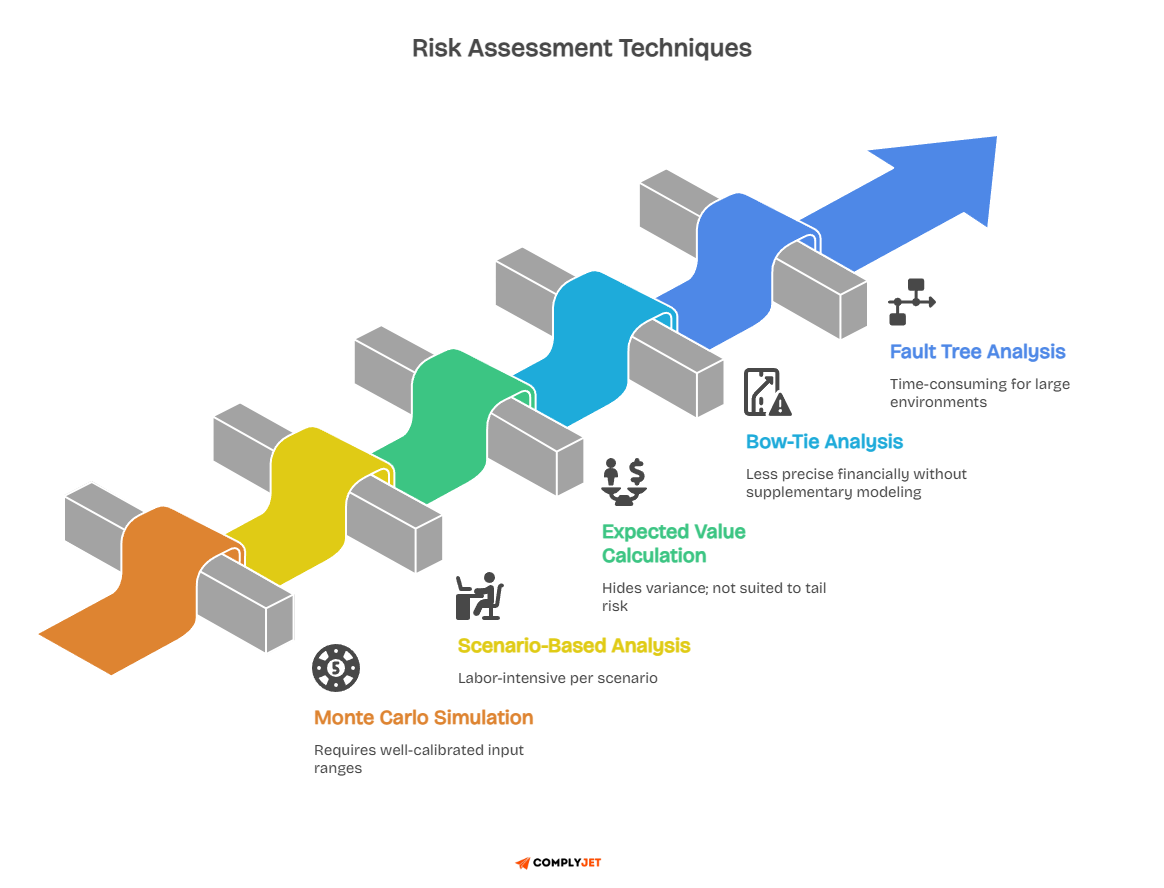

Risk quantification methods are the tools that turn raw data into financial estimates. Each method works in a different context. Understanding the main ones helps you choose the right approach for your program.

Monte Carlo Simulation

Monte Carlo simulation works by running thousands of hypothetical scenarios. Each run uses slightly different values for how often a threat occurs and how much it costs, drawn from a realistic range based on your data and experience.

The output is not one number. It is a spread of possible outcomes. You might find there is a 15% chance of a loss exceeding $4M, and a 70% chance it stays below $1M. That range is far more honest than any single estimate because real incidents do not play out the same way twice.

A ransomware attack can cost $50K or $50M, depending on timing, response speed, and data sensitivity. Simulation captures the full picture rather than forcing one answer.
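To make the idea concrete, here is a small simulation sketch using only Python's standard library. Every input is hypothetical: a yearly event probability somewhere between 10% and 30%, and a cost drawn from a lognormal distribution with a $500K median. Real programs would calibrate these from incident data and expert estimates.

```python
import math
import random


def simulate_annual_losses(trials, p_event_range, loss_median_usd, loss_sigma, seed=42):
    """Return one simulated annual loss per trial.

    Each trial draws a yearly event probability from a uniform range, decides
    whether the event happens, and if so draws a cost from a lognormal
    distribution (many moderate losses, a few very large ones).
    """
    rng = random.Random(seed)
    mu = math.log(loss_median_usd)  # the median of a lognormal is exp(mu)
    losses = []
    for _ in range(trials):
        p_event = rng.uniform(*p_event_range)
        if rng.random() < p_event:
            losses.append(rng.lognormvariate(mu, loss_sigma))
        else:
            losses.append(0.0)
    return losses


def probability_exceeding(losses, threshold_usd):
    """Share of simulated years whose loss is above the threshold."""
    return sum(loss > threshold_usd for loss in losses) / len(losses)


# Hypothetical inputs: 10-30% yearly chance, median cost $500K, wide spread.
losses = simulate_annual_losses(10_000, (0.10, 0.30), 500_000, 1.5)
for threshold in (1_000_000, 4_000_000):
    print(f"P(loss > ${threshold:,}) = {probability_exceeding(losses, threshold):.1%}")
```

The output is a pair of exceedance probabilities rather than one number, which is the kind of spread described above.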

The FAIR Institute has extensive documentation on how Monte Carlo methods apply specifically to cyber risk quantification.

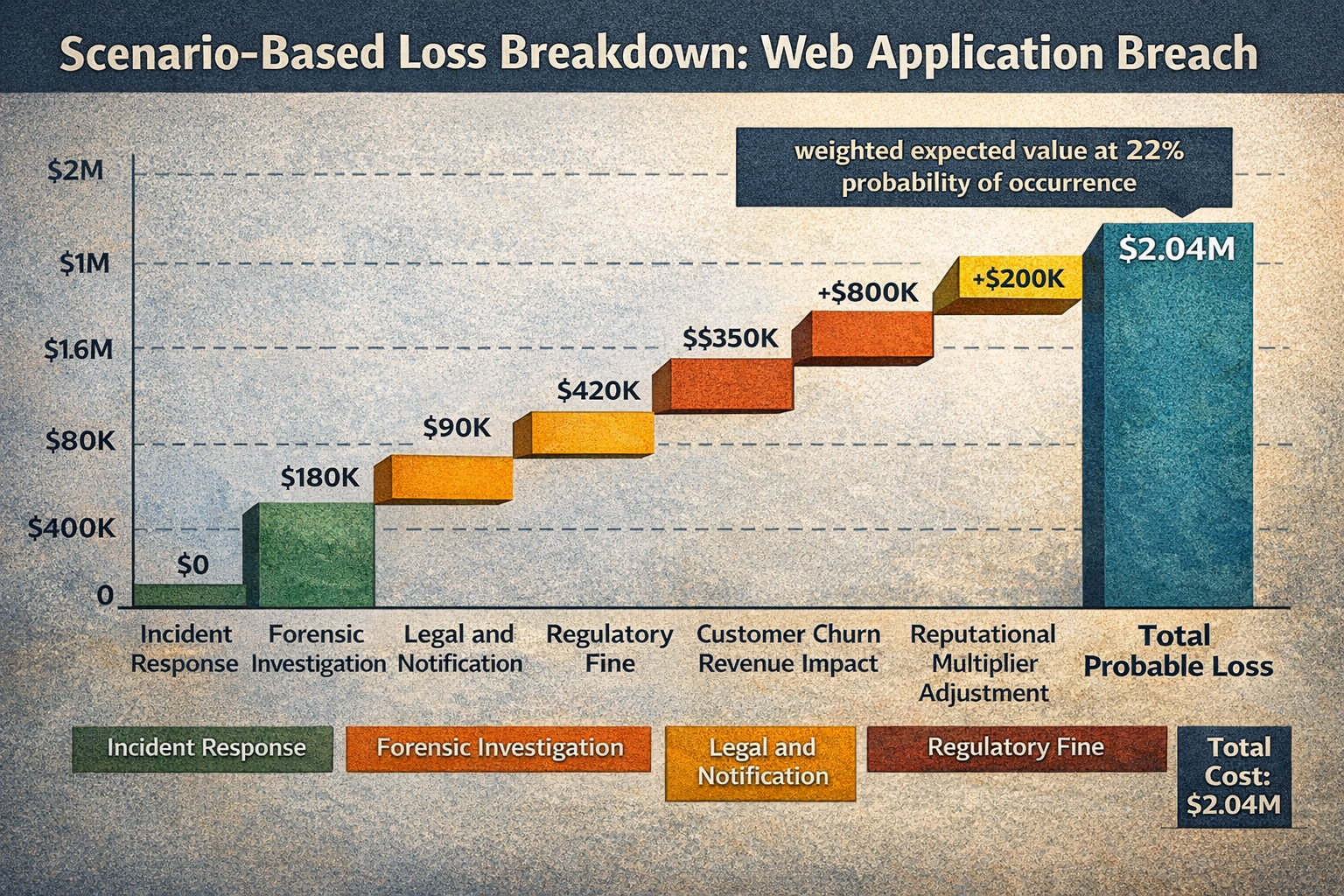

Scenario-Based Analysis

Scenario-based analysis builds a specific threat story. You describe a realistic event, like a vendor breach that exposes your customer data, and then walk through every cost that follows: legal fees, notification costs, regulatory fines, and lost revenue.

You then weight the total by the probability it actually happens to get your expected loss from that scenario:

Expected Scenario Loss = Total Scenario Cost x Probability of Occurrence

What makes this method powerful is that it forces specificity. You are not debating "data breach risk" in the abstract. You are looking at one event with a calculated financial outcome, which makes it far easier for leadership to engage and act.
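A short sketch shows how a scenario like the vendor breach might be costed. The line items match the ones named above, but every dollar figure and the 5% probability are made up for illustration.

```python
# Hypothetical cost breakdown for one scenario: a vendor breach exposing customer data.
vendor_breach_costs_usd = {
    "legal_fees": 250_000,
    "notification_costs": 100_000,
    "regulatory_fines": 400_000,
    "lost_revenue": 750_000,
}


def expected_scenario_loss(costs_usd: dict, probability: float) -> float:
    """Expected scenario loss = total scenario cost x probability of occurrence."""
    return sum(costs_usd.values()) * probability


total_cost = sum(vendor_breach_costs_usd.values())
expected = expected_scenario_loss(vendor_breach_costs_usd, probability=0.05)
print(f"Total scenario cost: ${total_cost:,.0f}")   # $1,500,000
print(f"Expected loss:       ${expected:,.0f}")     # $75,000
```

Keeping the line items separate makes it easy to challenge one estimate without reopening the whole scenario.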

The MITRE ATT&CK framework can help you build realistic threat scenarios by mapping known adversary tactics to your specific environment.

Expected Value Calculation

Expected value is the simplest approach. If there is a 20% chance of a $1M loss, the expected value is $200K. Simple to explain, useful as a quick baseline.

It works best when you have solid estimates of both probability and impact. It breaks down when probabilities are highly uncertain, which is common with newer or evolving attack types. Use it as a starting check alongside more detailed risk quantification methods, not as your only tool.

Bow-Tie Analysis

Bow-tie analysis places the risk event at the centre. On the left, you map what causes it. On the right, you map what consequences follow. Your controls sit on both sides, showing where they prevent causes and where they limit damage after the fact.

This method is especially useful when you want to understand how one failure cascades into multiple outcomes.

It makes the connections visible before you start calculating, which makes everything that follows more accurate. It also integrates cleanly with the CIS Controls framework, which gives you a standardised way to evaluate where your controls sit on the bow-tie diagram.

Methods give you the mechanics. But to run risk quantification at scale inside a business, you need a model.

A model gives you a consistent structure to apply these methods repeatedly and get comparable outputs. That is the focus of the next section.

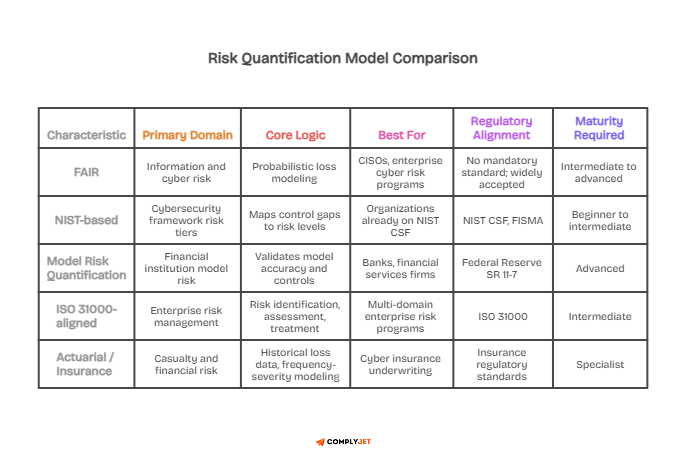

Risk Quantification Models: Choosing the Right Structure for Your Business

A risk quantification model is a structured approach that defines how you collect, process, and present risk data. Methods are the techniques.

Models are the frameworks that govern how you apply those techniques consistently over time.

What Makes a Risk Quantification Model Useful

A useful model does three things. It tells you what data to collect. It specifies how to turn that data into a financial estimate. And it produces outputs that people outside the security team can understand and act on without needing a glossary.

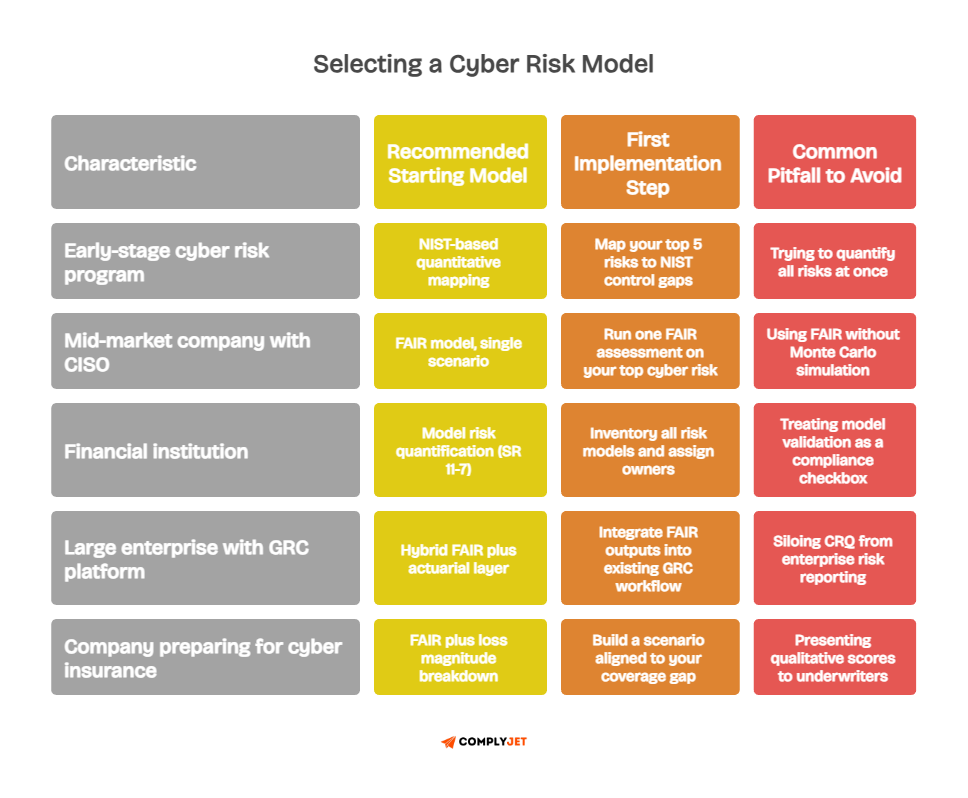

The FAIR risk model is the most widely recognised model for cyber and information risk.

NIST-based quantitative approaches pair well with the NIST Cybersecurity Framework. Financial institutions often work within model risk frameworks tied to their specific regulatory environment.

Selecting a Model for Your Context

If your primary concern is cyber and information risk, FAIR is the natural starting point. If you operate in a heavily regulated financial environment, you may need a model aligned to your specific regulatory expectations.

The Risk IT Framework is another option worth reviewing for governance-heavy environments.

Whatever model you choose, the quality of the output depends entirely on the quality of the data you feed it. You cannot model what you have not inventoried.

This is why the process matters as much as the model itself. Start with a structured cyber risk assessment to build the data foundation your model needs.

The FAIR Framework: The Gold Standard for Cyber Risk Quantification

FAIR stands for Factor Analysis of Information Risk. It is an open standard for quantifying information risk in financial terms, originally developed by Jack Jones, promoted by the FAIR Institute, and published by The Open Group as Open FAIR.

FAIR gives you a structured way to break risk down into components that can actually be measured and expressed in dollars.

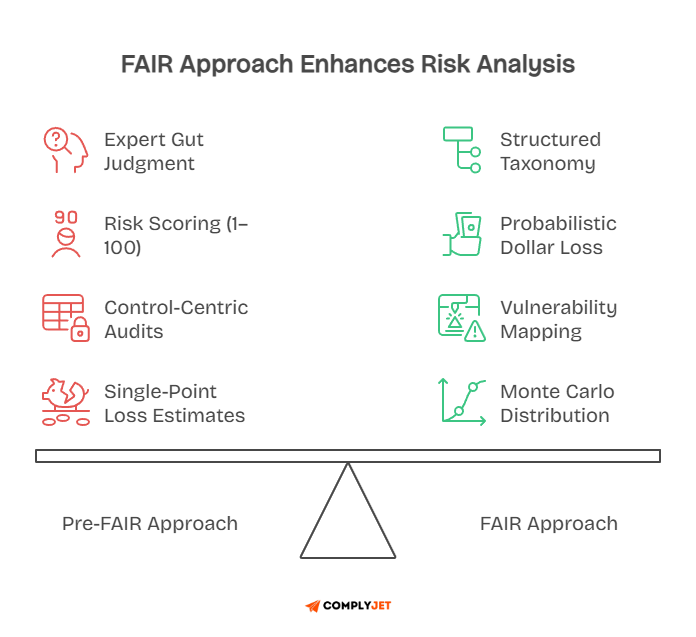

Before FAIR, most cyber risk analysis was qualitative. FAIR changed that by introducing a model that treats risk as a function of frequency and magnitude, not colour codes.

What Is FAIR and Why It Matters

At its core, FAIR defines risk as a product of two things: how often a loss event occurs, and how large the loss is when it does.

Risk = Loss Event Frequency x Loss Magnitude

The insight is in how it breaks each of those down further. FAIR separates threat events from loss events. A threat event is any action a bad actor takes toward your assets.

A loss event is one that actually results in financial damage. Not every threat becomes a loss. FAIR builds that distinction into the model, which makes it more honest than methods that treat every alert as a guaranteed incident.

The FAIR Institute publishes a growing library of guidance, case studies, and practitioner resources for organisations adopting this methodology.

How the FAIR Model Works

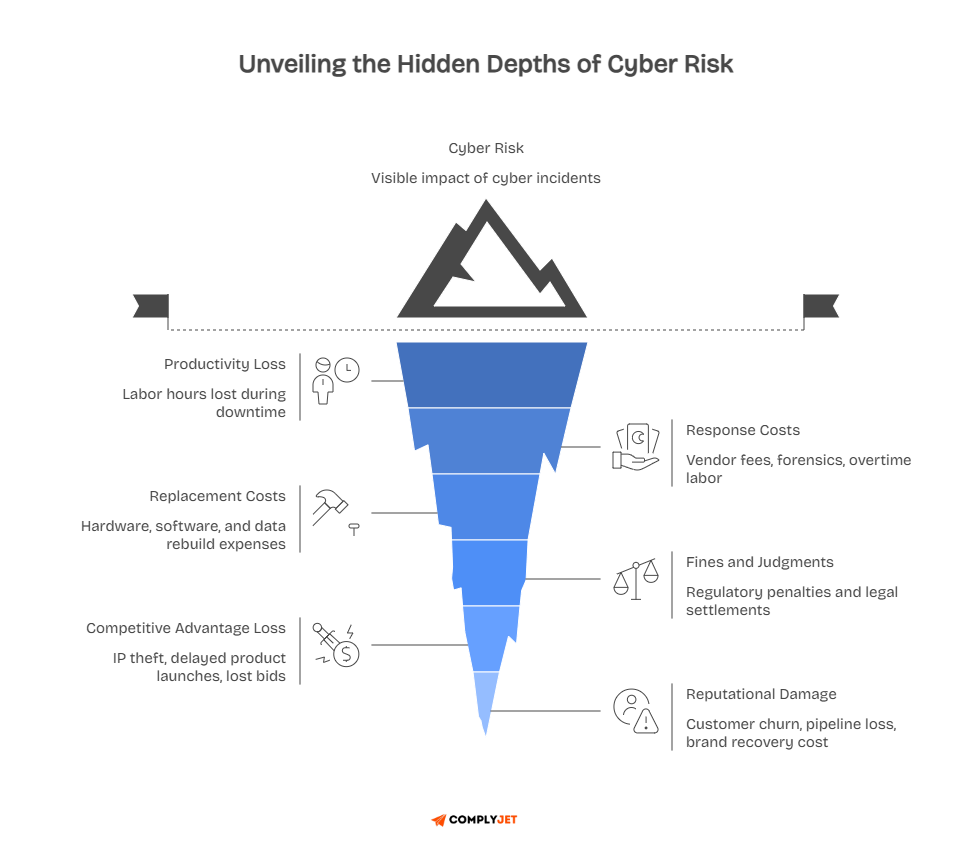

FAIR breaks loss into six categories: productivity loss, response costs, replacement costs, fines and judgments, competitive advantage loss, and reputational damage. Each can be estimated separately and combined into a total.

Crucially, FAIR uses ranges rather than single numbers for each input. Instead of saying "this attack costs $500K," you estimate it costs somewhere between $200K and $1.5M, depending on circumstances.

That honesty about uncertainty is one of the reasons FAIR has become the industry standard. The Open Group's Open FAIR Body of Knowledge formalises these definitions and is freely available for practitioners who want to go deeper.

A FAIR assessment typically starts with one specific risk scenario. You pick one threat type, one asset class, and one category of loss.

You gather data, run it through a Monte Carlo simulation across your estimated ranges, and get a distribution of probable losses.
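The sketch below shows roughly what such a run could look like for one scenario. It is a simplification, not the Open FAIR specification: the ranges are hypothetical, only three of the six loss forms are modelled, and a triangular distribution stands in for whatever distribution your tool uses.

```python
import random

# Hypothetical (min, most likely, max) estimates for a single scenario.
THREAT_EVENTS_PER_YEAR = (1, 4, 12)       # attempts against the asset
VULNERABILITY = (0.02, 0.05, 0.15)        # chance an attempt becomes a loss event
LOSS_FORMS_USD = {                        # magnitude per loss event, by loss form
    "response": (100_000, 400_000, 1_500_000),
    "fines_and_judgments": (0, 250_000, 2_000_000),
    "reputation": (50_000, 300_000, 3_000_000),
}


def draw(rng, low, mode, high):
    """Sample from a triangular distribution over an estimated range."""
    return rng.triangular(low, high, mode)


def simulate_year(rng):
    """Simulate one year: threat events, which become loss events, and their cost."""
    attempts = round(draw(rng, *THREAT_EVENTS_PER_YEAR))
    vulnerability = draw(rng, *VULNERABILITY)
    total = 0.0
    for _ in range(attempts):
        if rng.random() < vulnerability:  # this threat event became a loss event
            total += sum(draw(rng, *r) for r in LOSS_FORMS_USD.values())
    return total


def run(trials=20_000, seed=7):
    rng = random.Random(seed)
    return [simulate_year(rng) for _ in range(trials)]


annual_losses = run()
p_over_3m = sum(loss > 3_000_000 for loss in annual_losses) / len(annual_losses)
print(f"P(annual loss > $3M) = {p_over_3m:.1%}")
```

Note how the code keeps threat events and loss events separate: most simulated attempts cost nothing, which mirrors the distinction FAIR draws.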

FAIR Analysis in a Business Context

A FAIR analysis for a mid-size company might examine an external attacker gaining access to customer data through a web application. You estimate how often attackers target similar companies. You assess your vulnerability given your current controls. You model the loss across response costs, legal fees, and regulatory fines.

The output might tell you there is a 20% chance of a loss exceeding $3M in any given year from that one scenario. A board can make an insurance decision from that.

A CISO can prioritise remediation against it. That is the kind of output that actually moves decisions.

What Other Risk Quantification Standards and Frameworks Govern the Field

Risk quantification does not exist in a vacuum. It operates within a broader ecosystem of standards and frameworks that define how organisations should identify, measure, and manage risk. Knowing which standards apply to your business helps you build a program that holds up to scrutiny.

Standards matter for another reason, too. When a regulator, auditor, or board asks how you measure risk, pointing to a recognised framework adds legitimacy to your numbers.

A well-chosen risk quantification framework signals that your program is principled, not improvised.

ISO 31000: The Global Risk Management Standard

ISO 31000 is the international standard for risk management. It provides principles and guidelines that apply across any industry or organisation size. It does not prescribe specific risk quantification techniques, but it defines the structure within which quantification should happen.

The standard covers the full risk management cycle: scope, context, criteria, assessment, treatment, and monitoring.

Risk quantification fits within the assessment phase. ISO 31000 reinforces that risk assessment methodologies should be appropriate to the context and produce outputs that support informed decision-making.

For businesses operating across multiple countries or industries, ISO 31000 provides a common language.

When your risk quantification process aligns with this standard, your outputs are more defensible to external stakeholders and easier to compare across business units. It also complements ISO 27001, which governs information security management and is often implemented alongside a formal risk quantification program.

NIST SP 800-30 and the Cybersecurity Framework

NIST SP 800-30 is a US government publication specifically designed to guide risk assessments in information systems. It provides a structured approach to risk assessment, covering qualitative, semi-quantitative, and quantitative analysis, that many federal agencies and private organisations follow.

The NIST Cybersecurity Framework pairs naturally with SP 800-30. Version 1.1 of the framework identifies five functions: Identify, Protect, Detect, Respond, and Recover (version 2.0 adds a sixth, Govern). Risk quantification feeds directly into the Identify and Protect functions by putting financial figures behind the threats your controls are designed to address.

Learn how to map your existing controls to the NIST Cybersecurity Framework.

For cyber security risk quantification specifically, NIST guidance is one of the most commonly referenced bodies of work. It is particularly useful for organisations in regulated industries where demonstrating a structured, documented risk quantification process is a compliance requirement.

COSO ERM and Enterprise Risk Management Standards

The COSO Enterprise Risk Management framework takes a broader view. It connects risk management to strategy and business performance. Where NIST and FAIR focus heavily on cyber and information risk, this framework addresses enterprise risk across all dimensions: strategic, operational, financial, and compliance.

For organisations that need to align their quantitative risk management program with enterprise governance structures, this is the relevant reference. It defines how risk appetite and risk tolerance are set at the board level and how those thresholds should drive quantification targets across the business. Read our guide on building a board-aligned enterprise risk management program.

Think of it this way. FAIR tells you what a specific risk costs. COSO tells you how much risk your organisation is willing to accept in the first place, and who is accountable for that decision.

You need both to run a mature program. The ISACA COBIT framework also pairs well with COSO ERM for organisations that need governance and management of enterprise IT risk in a single integrated structure.

OCTAVE and Operationally Driven Frameworks

OCTAVE, which stands for Operationally Critical Threat, Asset, and Vulnerability Evaluation, was developed at Carnegie Mellon University. It is a risk assessment methodology designed for organisations that want to conduct risk assessments with internal teams rather than external consultants.

OCTAVE Allegro, the most widely used variant, focuses on information assets and the environments in which they exist. It is less mathematically intensive than FAIR, making it a useful starting point for organisations early in their quantitative risk journey.

Download the full OCTAVE Allegro methodology guide.

It also pairs well with FAIR in practice. Use OCTAVE to identify and scope your risk scenarios, then apply FAIR to quantify them financially.

That combination gives mid-size businesses structure without the overhead of a full enterprise risk platform, and still produces financial outputs that leadership can actually use.

See how to combine OCTAVE and FAIR in a practical risk assessment workflow.

How These Frameworks Work Together

No single framework covers everything your business needs. The strongest risk quantification programs draw from multiple standards.

ISO 31000 provides the governance structure. NIST SP 800-30 guides the technical risk assessment methodology. FAIR provides the quantification model. COSO ERM connects it all to board-level strategy.

Choosing the right combination depends on your industry, regulatory environment, and organisational maturity. A financial services firm operating under banking regulations will lean more heavily on NIST and COSO.

A technology company focused on cyber security risk quantification may find that FAIR and the NIST Cybersecurity Framework cover most of what it needs.

Explore our framework-specific guides to find the right fit for your business.

The key is consistency. Whichever frameworks you adopt, apply them consistently across your program. Mixed methodologies that shift from one assessment to the next produce outputs that cannot be compared or aggregated meaningfully.

Consistency is what turns individual risk scenarios into a coherent enterprise risk picture.

Understanding the standards that govern risk quantification gives your program legitimacy and structure. But standards do not run themselves. You need a process that operationalises them inside your business. That is exactly what the next section walks through.

The Risk Quantification Process: Step by Step

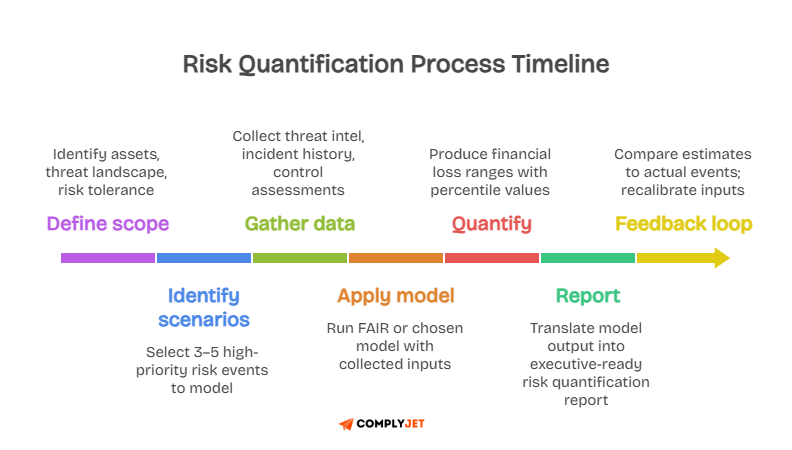

The risk quantification process is not a one-time event. It is a repeatable cycle that keeps your view of risk current. Setting it up correctly the first time saves significant effort in every cycle that follows.

1. Building the Foundation

The first phase is knowing what you are protecting. You cannot estimate the cost of losing something you have not inventoried. This means cataloguing your critical data, systems, processes, and third-party dependencies.

A structured asset inventory is the first step in any credible risk quantification program.

Most organisations stumble here because they try to quantify everything at once. That produces shallow analysis across too many scenarios.

Start with three to five key scenarios and build from there. Deep analysis of the risks that matter most is far more valuable than light analysis on everything. The NIST Cybersecurity Framework's Identify function gives you a solid structure for this initial scoping work.

2. Running the Quantification

Once your scenarios are defined, you gather data to populate your model: internal incident history, industry breach data, threat intelligence, and an honest assessment of how effective your current controls actually are.

A practical check before approving any security investment is to ask whether it pays for itself. If a tool costing $400K a year reduces your expected annual loss by only $150K, it is not a sound investment.

Risk quantification gives you the evidence to see that clearly before you spend the money, rather than after. Use this investment prioritisation logic to build a business case for your next security initiative.
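The check can be sketched in a few lines, assuming both figures are annual. The function names are illustrative, and the comparison ignores anything the tool delivers beyond loss reduction.

```python
def net_annual_benefit(annual_cost_usd: float, annual_loss_reduction_usd: float) -> float:
    """Expected loss avoided per year minus what the control costs per year."""
    return annual_loss_reduction_usd - annual_cost_usd


def pays_for_itself(annual_cost_usd: float, annual_loss_reduction_usd: float) -> bool:
    """True only when the risk reduction is worth more than the control."""
    return net_annual_benefit(annual_cost_usd, annual_loss_reduction_usd) > 0


# The example from the text: a $400K tool that reduces expected loss by $150K.
print(net_annual_benefit(400_000, 150_000))   # -250000
print(pays_for_itself(400_000, 150_000))      # False
```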

The output of this stage is your risk quantification report: probable loss ranges, the scenarios driving the most exposure, and the controls that would most reduce it.

That report goes to the board, the CFO, and the audit committee. See what a board-ready risk quantification report looks like in practice - Book a demo!

3. Keeping the Process Current

Threats evolve. Your environment changes. A model that was accurate six months ago may be significantly outdated today.

Build in a quarterly review at a minimum to refresh your key scenarios with current data. The MITRE ATT&CK framework is a useful reference for keeping your threat scenarios aligned with how real-world adversary tactics are evolving.

When an incident occurs, compare what actually happened to what your model predicted. If there is a large gap, update your inputs.

Over time, this calibration process makes your estimates sharper and your decisions more reliable. Each cycle produces better data, which produces more accurate estimates, which produce better decisions. That compounding effect is the long-term value of a mature CRQ program.
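One simple way to run that comparison is sketched below. The 25% tolerance and the three labels are hypothetical choices; your program would set its own definition of a "large gap."

```python
def calibration_check(predicted_low_usd, predicted_high_usd, actual_usd, tolerance=0.25):
    """Compare an observed loss with the range the model predicted.

    Returns "within range", "minor gap", or "update inputs". The gap is the
    distance from the nearest bound, as a fraction of that bound.
    """
    if actual_usd < 0:
        raise ValueError("actual_usd must not be negative")
    if predicted_low_usd <= actual_usd <= predicted_high_usd:
        return "within range"
    nearest = predicted_low_usd if actual_usd < predicted_low_usd else predicted_high_usd
    gap = abs(actual_usd - nearest) / nearest
    return "minor gap" if gap <= tolerance else "update inputs"


# Hypothetical model prediction for a scenario: $200K to $1.5M.
print(calibration_check(200_000, 1_500_000, actual_usd=500_000))    # within range
print(calibration_check(200_000, 1_500_000, actual_usd=1_700_000))  # minor gap
print(calibration_check(200_000, 1_500_000, actual_usd=3_000_000))  # update inputs
```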

How to Quantify Specific Risk Types in a Business

The same core approach applies across risk types. You identify the threat, estimate how often it might happen, and estimate what it would cost.

The inputs change depending on the category. The logic stays consistent.

Quantifying Cyber Risk

Cyber risk quantification starts with understanding which threat actors and attack types are most relevant to your industry.

A healthcare company faces very different threats than a financial services firm. Your estimates should reflect your specific environment, not just industry averages.

The potential impact of a cyber event includes both direct costs, like incident response and recovery, and indirect costs, like customer churn, regulatory fines, and reputational damage. Leaving out indirect costs routinely leads organisations to underestimate their true exposure by a significant margin. Both belong in your calculation.

Quantifying Reputational Risk

Reputational damage does not come with an invoice. But it shows up in measurable places: customer retention rates, sales close rates, and, for publicly traded companies, stock price movement.

The practical approach is to build a financial proxy. Estimate what a meaningful drop in customer retention costs your business annually.

Model what a fall in deal close rate looks like across your sales pipeline over a quarter. Use those as your reputational loss range for a given incident. A breach that makes the news typically costs far more than one that stays contained and quiet.

Your model should reflect that difference. The Ponemon Institute publishes research on the long-term reputational and financial consequences of public-facing security incidents that can inform your proxy estimates.
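A proxy of this kind might be sketched as follows. Every figure is hypothetical (customer count, revenue per customer, pipeline size, and the size of each drop), and the two cases simply contrast a contained incident with a public one.

```python
def retention_loss(customers, annual_revenue_per_customer_usd, retention_drop):
    """Annual revenue lost when retention falls by the given fraction."""
    return customers * annual_revenue_per_customer_usd * retention_drop


def pipeline_loss(quarterly_pipeline_usd, close_rate_drop):
    """Quarterly revenue lost when the deal close rate falls by the given fraction."""
    return quarterly_pipeline_usd * close_rate_drop


def reputational_proxy(retention_drop, close_rate_drop):
    """Combine both proxies for a hypothetical business."""
    return (
        retention_loss(2_000, 10_000, retention_drop)
        + pipeline_loss(5_000_000, close_rate_drop)
    )


contained = reputational_proxy(retention_drop=0.01, close_rate_drop=0.02)
public = reputational_proxy(retention_drop=0.05, close_rate_drop=0.10)
print(f"Reputational loss range: ${contained:,.0f} to ${public:,.0f}")
# Reputational loss range: $300,000 to $1,500,000
```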

Quantifying Vendor and Residual Risk

Every vendor that holds your data or runs critical processes carries financial exposure. When they fail, part of that loss often lands on you.

Our third-party risk assessment framework gives you a structured starting point for mapping vendor exposure.

Residual vendor risk is what remains after you have applied your own controls and contractual protections. You measure it by starting with the full financial impact of a vendor failure, then factoring in how much your controls and contracts actually reduce that exposure.

What remains is the number you monitor and decide whether to accept, insure against, or push the vendor to reduce further.

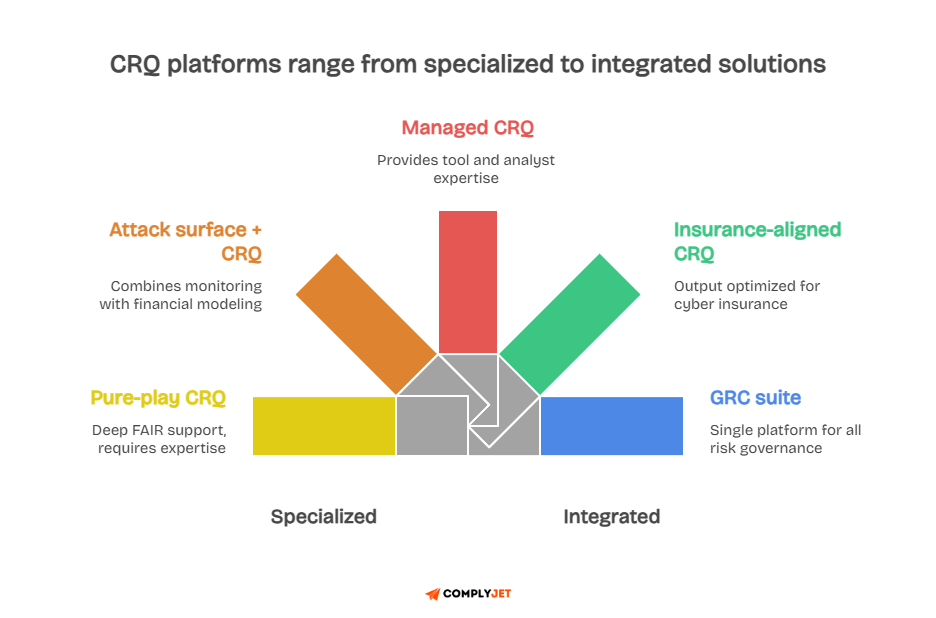

What to Look For in Cyber Risk Quantification Tools and Software

The market for cyber risk quantification tools has matured significantly in the last five years. There are dedicated platforms built specifically for CRQ, as well as broader risk management tools that include quantification modules.

How These Tools Actually Work

Most platforms operate in two layers. The first ingests data: asset inventories, threat intelligence, vulnerability scan results, and historical incident data. The second runs probabilistic models across that data to produce financial loss estimates.

The quality of the output depends entirely on the quality of the input. Tools that connect directly to your existing security stack produce better estimates than tools that rely on manual data entry.

Integration capability is one of the most important criteria when evaluating any platform.

The FAIR Institute maintains a list of vendor solutions that have been assessed for FAIR methodology compatibility, which is a useful shortlist when you start your evaluation.

Navigating the Market

The market includes large enterprise platforms, mid-market solutions, and specialised boutique tools.

The best option for your organisation is not necessarily the most complex one. It is the one your team will actually use consistently.

Three questions cut through most of the noise when evaluating options.

- Does it support the methodology your program uses?

- Does it integrate with your existing security data sources?

- Does it produce outputs that your leadership can understand and use for decisions?

If all three answers are yes, you have a strong candidate.

One thing most teams discover mid-evaluation is that the tool conversation quickly exposes a deeper gap: unclear compliance posture, missing evidence, and controls that exist on paper but have never been tested. When your controls are undocumented and your evidence is scattered across spreadsheets and email threads, the numbers your quantification tool produces will reflect that mess.

That is the gap ComplyJet was built to close. The platform:

- Maps your controls to recognised frameworks like SOC 2, ISO 27001, and HIPAA so you always know exactly where you stand

- Collects compliance evidence continuously in the background, without your team having to stop and gather it manually before every audit or assessment

- Surfaces control gaps before they become audit findings or blind spots in your risk model

- Gives you documented, tested, real-world control data to feed into your quantification tool, so your outputs are based on fact rather than assumption

- Builds a compliance foundation that holds up when a regulator, insurer, or acquirer scrutinises your risk numbers

- Keeps your compliance data accurate and current as your environment changes, so your CRQ program does not drift out of sync with reality

Talk to the team to see how it fits into your current setup before you start shopping for a quantification platform.

Specialised Platforms

FortifyData is one example of a platform built for continuous cyber risk assessment and financial impact modelling.

It combines attack surface monitoring with risk scoring, making it relevant for organisations that want ongoing visibility into their cyber risk rather than point-in-time snapshots.

The best platforms do not just tell you your current risk level. They track how that level changes as your environment and the threat landscape evolve together.

For organisations new to CRQ, the service layer often matters as much as the software. Look for vendors that offer methodology guidance and ongoing calibration support, not just a tool license.

Explore our guide to evaluating CRQ service providers alongside their software.

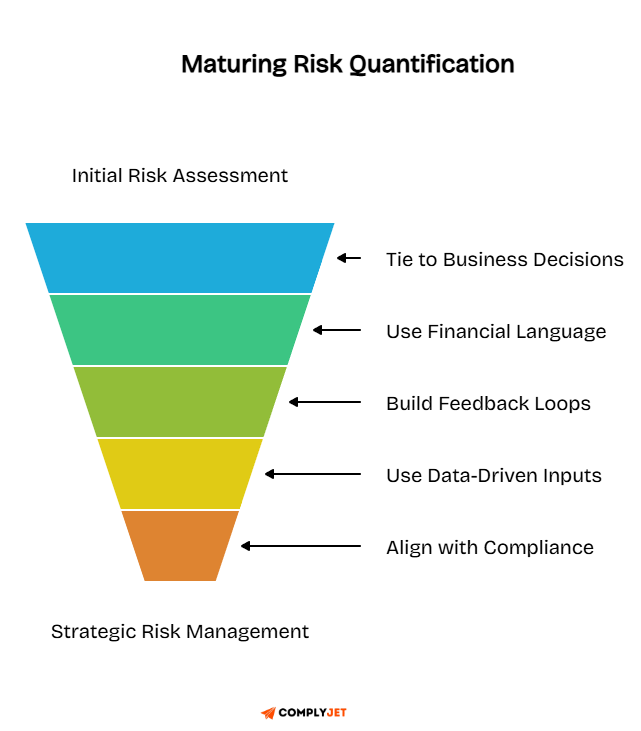

Best Practices for Risk Quantification in a Business

The organisations that get the most value from risk quantification share a few common habits. These are practical disciplines that keep the program grounded and useful over time, not grand strategies.

1. Tie Quantification to Business Decisions

Risk quantification only matters if it influences decisions. Build your program around the decisions your leadership actually needs to make: where to invest in controls, how much cyber insurance to carry, and which vendor relationships carry the most financial exposure.

Apply a simple test before any control investment gets approved: does the financial risk this control removes actually outweigh what the control costs? If a $500K investment only reduces your expected annual loss by $200K, that is not a sound investment.

Risk quantification gives you the evidence to make that call before you commit the budget.
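As a rough sketch of that test, the snippet below compares the yearly risk reduction with the yearly cost of a control. The function name, the $1M baseline loss, and the reading of the $500K as a cost over the same one-year horizon are illustrative assumptions, not part of any standard.

```python
def control_net_benefit(annual_loss_before, annual_loss_after, annual_control_cost):
    """Return yearly risk reduction minus yearly control cost (positive = worth funding)."""
    risk_reduction = annual_loss_before - annual_loss_after
    return risk_reduction - annual_control_cost


# Hypothetical baseline: $1M expected annual loss, cut to $800K by a $500K control
net = control_net_benefit(1_000_000, 800_000, 500_000)
verdict = "fund it" if net > 0 else "do not fund it"
print(f"Net benefit: {net:,.0f} ({verdict})")
```

A negative result means the control costs more than the loss it removes. A real program would also weigh multi-year costs and the uncertainty around both loss estimates.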

2. Build Feedback Loops and Stay Current

A model that is not regularly updated becomes misleading over time. Threats evolve, your environment changes, and estimates that were solid six months ago can drift significantly from reality.

Stay current with the latest threat intelligence by integrating live feeds into your quantification inputs.

When an incident occurs, compare your model's prediction to what actually happened. If the gap is large, update your inputs.

Over time, this calibration process compounds. Each update makes the next estimate more accurate, and the decisions that follow more reliable. The NIST Cybersecurity Framework's continuous monitoring guidance provides a practical structure for building this feedback discipline into your program.
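One simple way to run that comparison is to check whether the actual loss fell inside the range the model predicted. The sketch below assumes a three-point forecast; the `LossForecast` class, the `calibration_check` function, and the dollar figures are hypothetical names and values chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class LossForecast:
    low: float          # minimum likely loss
    most_likely: float  # most probable loss
    high: float         # worst credible loss


def calibration_check(forecast, actual_loss):
    """Classify an observed loss against the range the model predicted."""
    if actual_loss < forecast.low:
        return "overestimated"   # reality came in below the predicted range
    if actual_loss > forecast.high:
        return "underestimated"  # reality exceeded the worst credible loss
    return "within range"


forecast = LossForecast(low=100_000, most_likely=400_000, high=2_000_000)
print(calibration_check(forecast, actual_loss=2_500_000))
```

A run of "underestimated" or "overestimated" results across several incidents is a stronger signal to revisit inputs than any single miss.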

3. Communicate in Financial Language

Technical metrics do not move boards. Boards speak in financial terms. For every major risk scenario, lead with three numbers: the minimum likely loss, the most probable loss, and the worst credible outcome. Those three figures give leadership everything they need to make a proportionate decision without requiring them to understand how the model works.

When risk data is presented in financial terms, trade-offs become clearer.

Leadership can weigh cybersecurity risks against other business priorities using the same units and the same logic they use for every other major decision.
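To show where three such numbers might come from, here is a minimal simulation sketch. It assumes the analyst supplies a low, most likely, and high loss estimate, samples a triangular distribution, and reports the 10th, 50th, and 90th percentiles. The choice of distribution, the percentiles, and the function name are assumptions made for illustration; many programs use other distributions.

```python
import random
import statistics


def three_point_summary(low, mode, high, trials=10_000, seed=42):
    """Simulate losses from a triangular distribution and summarise them for a board slide."""
    rng = random.Random(seed)  # fixed seed so the sketch is repeatable
    losses = [rng.triangular(low, high, mode) for _ in range(trials)]
    deciles = statistics.quantiles(losses, n=10)  # nine cut points: P10 ... P90
    return {"p10": deciles[0], "p50": deciles[4], "p90": deciles[8]}


# Hypothetical analyst estimates for one ransomware scenario, in dollars
summary = three_point_summary(low=50_000, mode=200_000, high=2_000_000)
for label, value in summary.items():
    print(f"{label}: ${value:,.0f}")
```

Reporting percentiles rather than the raw inputs keeps the extremes honest: the top figure is a loss the model expects to be exceeded only about one year in ten, not the single worst draw.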

Frequently Asked Questions About Risk Quantification

What is a risk quantification report?

A risk quantification report is a document that presents the financial output of your risk analysis. It shows the probable loss range for each scenario you modelled, the likelihood of each outcome, and the controls that would most reduce the exposure.

The report is designed for executive and business audiences, not technical teams. A well-built report translates model outputs into board-ready language: dollar ranges, probabilities, and prioritised recommendations that leadership can act on without needing to understand the underlying model.

What are the 5 steps of risk management?

The 5 steps of risk management are: identify the risk, assess the risk, evaluate and prioritise, treat the risk, and monitor and review. Risk quantification primarily supports the assessment and evaluation steps by turning identified risks into financial estimates.

Each step feeds the next. You cannot assess a risk you have not identified. You cannot treat a risk you have not evaluated. And without monitoring, your treatment decisions become outdated.

The cycle is continuous, not a one-time exercise. The ISO 31000 standard provides a globally recognised structure for operationalising all five steps.

What are the 3 C's of risk?

The 3 C's of risk are Consequence, Chance, and Control. You assess what happens if a risk materialises, how likely that is, and how much control you have over the outcome.

In risk quantification, all three translate into model inputs. Consequence becomes your loss magnitude. Chance becomes how often the threat occurs. Control becomes the probability that your defences hold when tested. The FAIR framework maps directly onto this structure, making it a natural methodology choice for businesses applying the 3 C's in practice.
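The mapping can be sketched with point estimates. In FAIR terms, Chance corresponds to threat event frequency, Control feeds vulnerability (here taken as one minus the probability that defences hold), and Consequence is loss magnitude. The function name and the example figures are hypothetical, and a fuller FAIR analysis would use ranges rather than single values.

```python
def annualized_loss_exposure(threat_events_per_year, control_hold_probability, loss_per_event):
    """Combine Chance, Control, and Consequence into one expected yearly loss."""
    # Vulnerability: share of threat events that get past the controls
    vulnerability = 1.0 - control_hold_probability
    # Loss event frequency: threat events per year that actually cause a loss
    loss_event_frequency = threat_events_per_year * vulnerability
    return loss_event_frequency * loss_per_event


# Hypothetical inputs: 4 attempts a year, defences hold 90% of the time, $500K per loss
ale = annualized_loss_exposure(4, 0.90, 500_000)
print(f"Annualized loss exposure: ${ale:,.0f}")
```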

What are the 5 C's of risk assessment?

The 5 C's of risk assessment are Context, Cause, Consequence, Control, and Communication. Context defines the operating environment. Cause identifies the threat source. Consequence estimates the impact. Control evaluates your defences. Communication ensures the findings reach the right people. Effective risk quantification brings financial precision to all five stages.

The NIST Risk Management Framework maps naturally onto this structure, particularly in how it handles context-setting and communication as formal program components rather than afterthoughts.

What are the 4 types of risk categories?

The 4 types of risk categories in most enterprise frameworks are strategic risk, operational risk, financial risk, and compliance risk. Some frameworks add a fifth for reputational risk, which is increasingly treated as its own category.

Cyber risk spans multiple categories simultaneously. A significant breach can trigger operational disruption, financial loss, compliance exposure, and reputational damage all at once. That is why cyber risk quantification often produces multi-category loss estimates rather than a single combined figure. The COSO ERM framework provides a useful taxonomy for organising these categories at the enterprise level.

What are the 7 steps of the risk management process?

The 7 steps of the risk management process are: establish context, identify risks, analyse risks, evaluate risks, treat risks, communicate and consult, and monitor and review.

This expanded version treats communication and context-setting as formal parts rather than background activities.

Risk quantification maps primarily to steps three and four, where analysis and evaluation happen. But the financial data produced in those steps should inform every other step in the cycle. Better data at the analysis stage produces better decisions at every stage that follows. ISO 31000 aligns directly with this 7-step structure and provides implementation guidance for each stage.

How do human risk quantification tools work?

Human risk quantification tools measure the risk introduced by employee behaviour. They analyse factors like phishing susceptibility, password hygiene, insider threat indicators, and security training outcomes. The output is a financial estimate of the exposure created by human-layer vulnerabilities.

They work by modelling the probability that a human-driven event, like clicking a phishing link, results in an actual loss. That financial estimate can then sit alongside your technical risk estimates in your broader enterprise risk program.

How do you aggregate risk quantification across an organisation?

Aggregating risk quantification means combining individual scenario-level estimates into an enterprise-level view. This is more complex than simply adding up numbers because risks are not always independent of each other. A single breach can trigger multiple loss categories at the same time.

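Most FAIR-based platforms handle aggregation automatically by accounting for the relationships between scenarios. Without that correlation modelling, organisations tend to misstate their total exposure: adding up each scenario's worst-case figure usually overstates it, while treating linked scenarios as independent understates the chance of losses landing together.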
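A toy simulation shows why the dependence assumption matters. The sketch below models two scenarios, each with a hypothetical 10% yearly probability and a $1M loss, once as independent events and once as driven by a single shared trigger. All names and figures are illustrative assumptions, not how any particular platform works.

```python
import random

P_EVENT = 0.10    # hypothetical yearly probability of each scenario
LOSS = 1_000_000  # hypothetical loss per scenario, in dollars


def simulate_total_losses(shared_trigger, trials=100_000, seed=7):
    """Simulate the combined yearly loss of two scenarios."""
    rng = random.Random(seed)
    totals = []
    for _ in range(trials):
        a_hit = rng.random() < P_EVENT
        # With a shared trigger, scenario B fires whenever scenario A does
        b_hit = a_hit if shared_trigger else rng.random() < P_EVENT
        totals.append(LOSS * (a_hit + b_hit))
    return totals


def mean(values):
    return sum(values) / len(values)


def percentile_95(values):
    ordered = sorted(values)
    return ordered[int(0.95 * len(ordered))]


independent = simulate_total_losses(shared_trigger=False)
linked = simulate_total_losses(shared_trigger=True)
print(f"independent: mean={mean(independent):,.0f}, p95={percentile_95(independent):,.0f}")
print(f"linked:      mean={mean(linked):,.0f}, p95={percentile_95(linked):,.0f}")
```

In this setup the average yearly loss is roughly the same under both assumptions, but the 95th-percentile year contains one loss when the scenarios are independent and two when they share a trigger. Adding the two standalone worst cases together quietly assumes the linked case.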

In which step will you quantify risk, cost, and time?

You quantify risk, cost, and time during the risk analysis step, which is the third step in the 7-step risk management process. At this point, you have identified your risks and are estimating both the financial cost and the time-to-impact of each one.

Time matters because some losses materialise quickly while others unfold over months. A ransomware event hits fast. Reputational damage can compound over quarters.

Your model should capture both dimensions so leadership gets an accurate picture of how and when losses are likely to occur. NIST SP 800-30 provides specific guidance on how to structure this time-sensitive analysis within a formal risk assessment.

How do you quantify residual vendor risk?

Start by estimating the full financial impact of a vendor failure on your operations. Then factor in the risk reduction provided by your existing controls and contractual protections. What remains is your residual exposure for that vendor relationship.

Review and update this estimate whenever a vendor's role changes, their control posture shifts, or your dependency on them increases. Residual vendor risk is not a number you calculate once and file away. It needs to reflect the current state of the relationship.
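The arithmetic can be sketched in a few lines. This example assumes control effectiveness is expressed as a fraction of the loss avoided and contractual protection as a dollar amount recovered. Both modelling choices, the function name, and the figures are hypothetical.

```python
def residual_vendor_exposure(inherent_loss, control_effectiveness, contractual_recovery):
    """Estimate what remains after controls and contractual protections are applied."""
    # Loss left over once existing controls have reduced the impact
    after_controls = inherent_loss * (1.0 - control_effectiveness)
    # Contractual recoveries (for example, indemnities) cannot push exposure below zero
    return max(after_controls - contractual_recovery, 0.0)


# Hypothetical vendor: $2M impact, controls avoid 60%, contract recovers $300K
residual = residual_vendor_exposure(2_000_000, 0.60, 300_000)
print(f"Residual exposure: ${residual:,.0f}")
```

Re-running the calculation with updated inputs whenever the vendor's role or control posture changes keeps the figure tied to the current relationship.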

From Guesswork to Governance

Risk will always exist. That is not the problem. The problem is running a business where the people making financial decisions and the people managing threats are speaking completely different languages. Risk quantification fixes that. It turns vague threats into financial estimates your leadership can actually act on.

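This guide has covered the full cycle: what risk quantification is, which methods and models work, how the FAIR framework operates, how to run the process, and how to choose tools that help.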

The insight most teams miss is that the goal is not a perfect model. It is a better decision made this quarter than last. Cyber risk quantification improves with repetition.

Your first model will be rough. Your tenth will be significantly more reliable. What is not acceptable is waiting for perfect conditions before you start.

The organisations that invest in this work do not just manage risk better. They compete better. They close deals faster, satisfy regulators without scrambling, and make budget decisions based on evidence rather than whoever argued loudest in the last meeting. They find their worst risks before those risks find them.

That is the difference between a risk program that earns a seat at the table and one that gets called in after the damage is done.

Start with one scenario, apply the framework, and commit to the feedback loop. Or talk to our experts to get started with your first risk quantification assessment!